Are predictions about AI’s impact on work useless? (April 2026)

Sign up here to get Career Futures in your inbox.

Welcome to the April edition of the Career Futures newsletter.

Are predictions about AI’s impact on work useless?

Spoiler: No. Not useless.

The labor market generates a lot of data, and it’s very much a worthwhile investment of time and energy to try and figure out what the patterns are and whether those patterns hold any predictive potential. And many entities, from academic researchers to massive consulting firms, are doing just that. There are many. There are so many.

Yet there’s not really a clear picture emerging. Why that is, and why predictions are still useful, is worth understanding, especially for those of us whose jobs involve helping other people navigate theirs.

Reason 1: Exposure is not displacement

Many studies try to quantify and predict occupational ‘exposure’ to AI. Most often, what such studies do is score occupational tasks (sourced from the US Bureau of Labor Statistics) on exposure to AI automation or augmentation. This is a reasonable place to start. Most researchers are careful to note that exposure, measured in this way, does not necessarily correlate with displacement risk. Yet that’s often exactly how AI exposure is interpreted in news stories (here is one example of researchers’ framing versus news framing).

News stories often overstate the correlation between AI exposure and displacement risk.

So it bears emphasizing: Exposure is not displacement! There are many factors beyond automation potential that complicate the story.

Cost is one. It may be more expensive for a company to attempt to automate work than to employ a worker, and this is especially true for small businesses. Massage therapy robots exist, for example, but they are fantastically expensive. The economics may make sense for massive gym chains or luxury hotels, but most massage therapy practices are small and probably won’t be purchasing six-figure robots (and even if they did, it’s not clear that their clients would want this).

Expertise is another factor: It’s true that not all work tasks are created equal. You could automate 90% of an occupation, but if the ‘economically valuable’ work is that remaining 10%, and it’s not automatable, then that occupation may remain resilient.

Consequence of error is yet another (and can be related to expertise): If the stakes of getting something wrong are high enough, full automation becomes unlikely no matter what. Commercial aviation is a vivid example: planes mostly fly themselves. But the consequence of failure is catastrophic, so it’s extremely unlikely we’ll ever get rid of pilots.

Autopilot handles most of a flight in commercial aviation, but it’s the parts that it doesn’t handle that make human pilots irreplaceable.

And so on. It’s worth noting that these factors interact dynamically. They are all at play at once, which makes it hard to predict any occupation’s future trajectory.

Reason 2: New technologies replace and create jobs, but never equally

This particular reason might feel like a talking point from the powers that be: Just wait a minute, because AI’s gonna create tons of awesome new high-paying jobs! While I’d treat such claims with a healthy skepticism, what is true is that historically, technological advances have tended to create new kinds of work. Economist David Autor has found that roughly 60% of workers today are employed in occupations that didn't exist in 1940; in other words, the majority of employment growth over the past 80+ years came from technology creating new kinds of roles.

The personal computer and the internet are probably the closest comparisons we have to AI: both were general-purpose technologies that promised to reshape the nature of work itself. And both did, eventually, create enormous new economic sectors and categories of work. But the transitions were neither painless nor instant.

To return to Autor: his research is often cited as reassurance, but it actually tells two different stories depending on the time period. From 1940 to 1980, new work creation outpaced job destruction: technology was, on net, a job-creating force. But from 1980 to 2018, that flipped. Automation eroded twice as many jobs as it had in the previous four decades, and job destruction actually outpaced job creation. The two periods present two conflicting data points. This mixed historical record makes it tough to predict how the story will play out with AI: while it’s near-certain that jobs will be created, what’s not clear is how many, or whether they will be quality jobs.

Reason 3: Even clear signals are hard to attribute

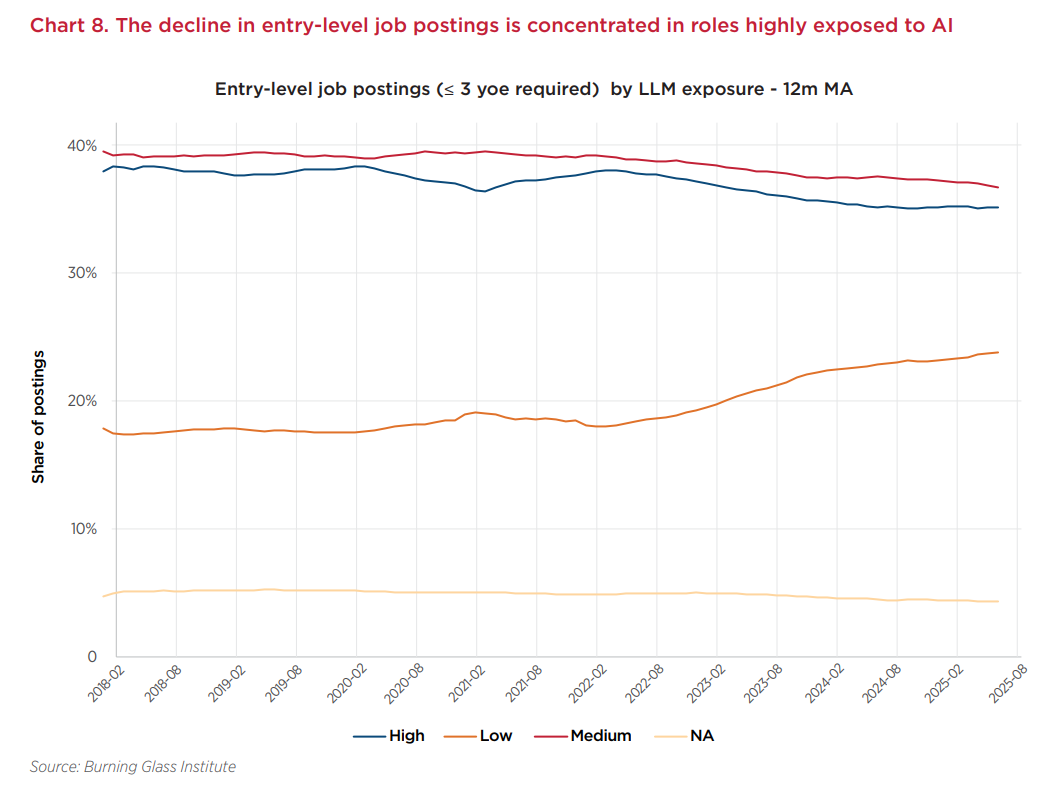

Right now, the labor market is not doing so well. Most sectors remain in a so-called ‘low hire, low fire’ stasis, and for entry-level knowledge workers, we may effectively be in a recession. The Burning Glass Institute, among others, has documented declines in junior-level job postings across knowledge work sectors, and unemployment among young workers in tech-adjacent occupations has ticked up noticeably. For recent grads trying to break into tech, finance, or marketing, the job market feels a lot worse than it did three years ago.

In the past few years, there’s been a steady erosion in demand for entry-level workers in occupations highly exposed to AI. But is AI the cause?

Is this because of AI? Most economists aren’t willing to go that far, because a number of other micro- and macroeconomic factors are in play: workforce reductions after pandemic-era over-hiring, rising interest rates, tariff uncertainty, and geopolitical tensions and conflicts. These and others are probably all contributing.

Does that mean AI is not impacting the labor market? Well, no, it almost certainly is, but it is difficult, at the moment, to disentangle AI’s impact from these other forces.

So, are predictions useless?

Not useless! While they may never be ironclad, they are useful as directional signals, particularly when considered together. The best ones are transparent about what they're actually measuring, and they surface new questions that push the research forward.

The big challenge for those of us in education and workforce development is not just in trying to understand these signals ourselves, but in making them understandable and useful for the young people who are actively navigating a noisy, turbulent labor market. They deserve to have the most accurate, most up-to-date information available.

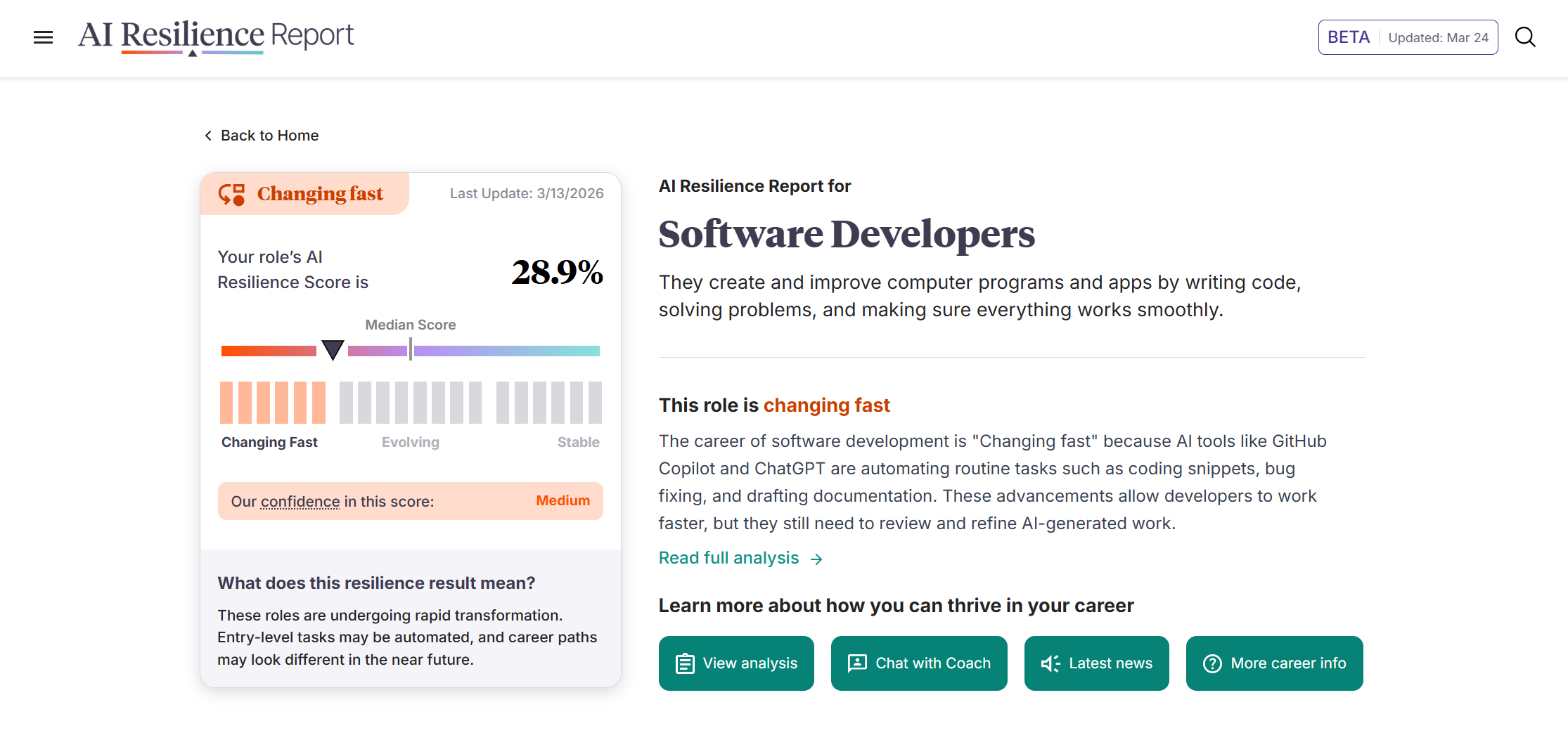

That's part of what we're trying to do with the AI Resilience Report project, which we rolled out in beta a few weeks ago. Rather than producing another standalone prediction, it brings multiple signals together–AI exposure data from Anthropic, Microsoft and others, long-term employment projections from the Bureau of Labor Statistics, and recent research on wage compression and worker agility from independent researchers–and contextualizes them at the occupation level.

The AI Resilience Report, now in beta, provides occupation-level snapshots by combining multiple signals with contextualizing analysis.

It is very much not a crystal ball, and we're still iterating on the methodology and adding data sources. But the goal is to close the gap between what the research says at a macro level and what a career advisor or job seeker can actually use.

Nobody is going to predict this thing with precision. The job is to help people make good decisions anyway.

What’s new with Coach and CareerVillage.org

Making Coach easier to deploy and trust at scale

March’s product update for Coach is not quite as flashy as February’s, but it does make Coach easier to deploy and manage. We updated assignments visibility so teams can stay aligned and avoid duplicating work, added automated reminder emails for pending classroom invitations to reduce onboarding friction, and improved performance on the login, authentication, and onboarding pages, among other changes. Expect some big updates in April, including proactivity functionality, SMS capabilities, and a redesigned homepage.

Bonus: Our Director of Engineering wrote a technical blog post about Coach’s (impressive) regression testing approach, as part of the team’s efforts to ensure best-in-class reliability. It’s the first in a series of articles going under Coach’s hood.

AI for Career Development (AICD) Coalition grows

I mentioned this in last month’s newsletter, but now it’s *official*: the AICD has onboarded an additional 65 or so organizations, bringing membership to around 150 organizations of all sizes, and from all across the career and workforce development ecosystem. Additionally, we’re excited to officially announce that International Youth Foundation (IYF) has joined the steering committee. IYF brings a critical international perspective to the work the coalition is doing, and we will benefit greatly from it.

CareerNet, a new benchmark dataset built from real CareerVillage.org data

The Learning Agency, in collaboration with Renaissance Philanthropy and Schultz Family Foundation, released a dataset built from CareerVillage.org data and metadata. CareerNet, as it’s called, is “designed to advance research and improve AI-powered career navigation tools that guide learners in reskilling, computer science, and allied health pathways,” according to the announcement. We’re excited to see how the data is used and improved.

What I’m reading: Prediction edition

As we’ve put together the AI Resilience Report, we’ve been reading a number of research papers that attempt to measure and/or predict AI’s impact on work in various ways. These are a few of the more recent papers that are complicating earlier findings.

AI, Automation, and Expertise, Bouke Klein Teeselink and Daniel Carey

This new paper usefully complicates the ‘exposure = displacement’ narrative by measuring the expertise required for work tasks. Key finding: “Expertise-raising automation increases advertised wages.” (my emphasis)

Task-Specific Technical Change and Comparative Advantage, Lukas Althoff and Hugo Reichardt

This paper introduces the concept of simplification: essentially, AI lowers the barrier for occupational entry. Key finding: “AI substantially reduces wage inequality while raising average wages by 21 percent. The equalizing force is simplification, compressing wage differences by enabling workers across skill levels to compete for the same jobs.”

Measuring US workers’ capacity to adapt to AI-driven job displacement, Sam Manning and Tomás Aguirre

Manning & Aguirre introduce an adaptive capacity index measuring worker agility after displacement. Key finding: “We find that AI exposure and adaptive capacity are positively correlated: many occupations highly exposed to AI contain workers with relatively strong means to manage a job transition.”

Thanks for reading 👋

– Eric Fershtman, Director of Marketing
